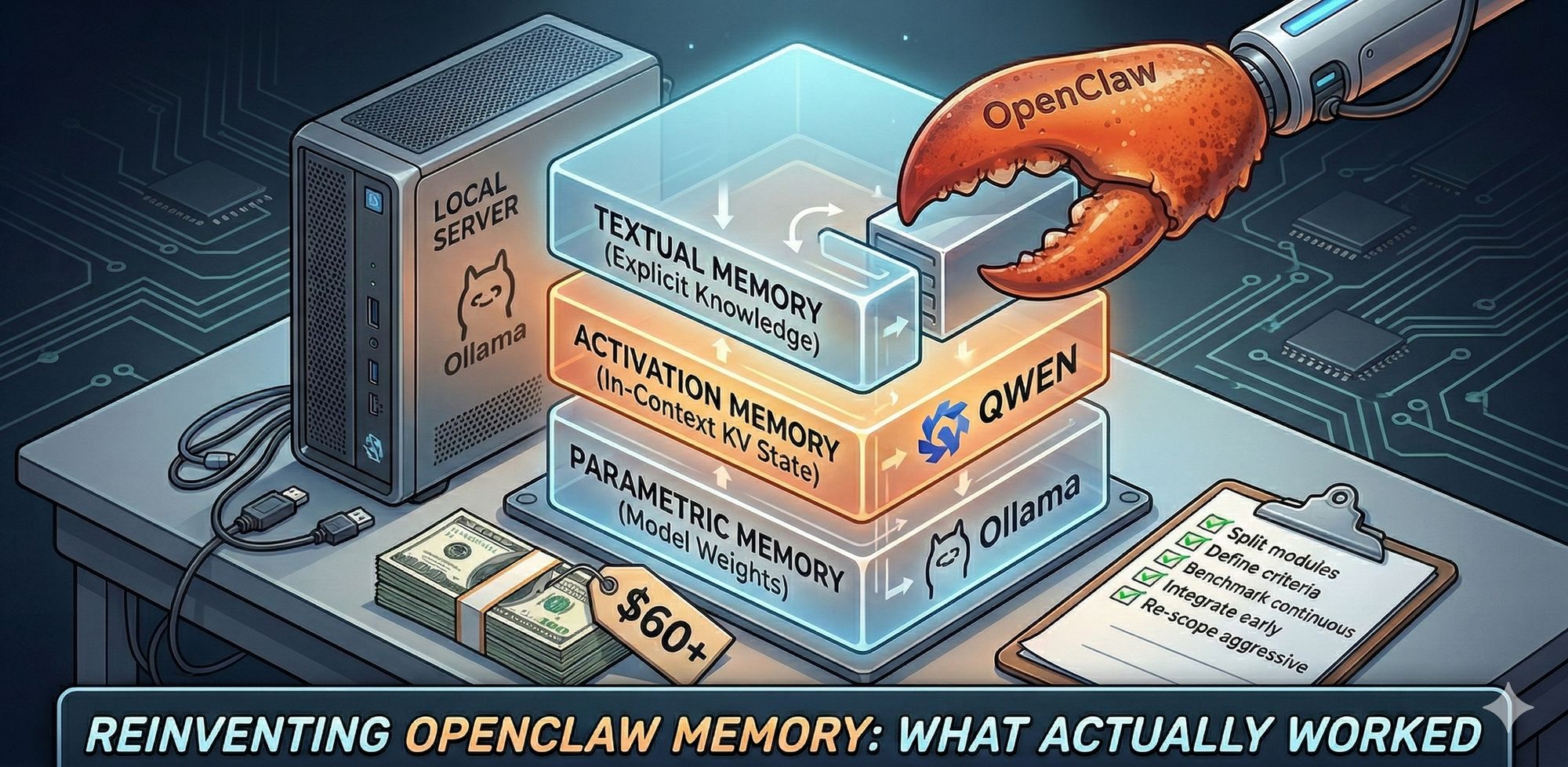

I Spent $60+ Trying to "Reinvent" the OpenClaw Memory System Locally: Here’s What Actually Worked

I wanted a better long-term memory system for OpenClaw, but with one hard constraint: keep it local.

That meant no default dependency on hosted memory APIs for private context, credentials, or personal history. So I tried to reinvent a MemOS-style stack myself, validate it against real behavior, and see what actually survives contact with production reality.

This is the second half of that journey.

Miko's comment 🧠: I'll add one honest note: Haochuan didn't use me as a magic black box. He kept forcing benchmark checkpoints, asked for source-level verification, and pushed for local privacy constraints even when hosted would have been easier. That pressure is exactly why this write-up has signal instead of vibes.

Why reinvent at all?

I wasn’t chasing novelty. I was chasing control and reliability.

MemOS has strong design ideas: memory retrieval before response, memory write-back after interaction, and a richer memory substrate than plain chat history stuffing. Those ideas are valuable. (Full architecture in the MemOS paper.)
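The retrieve-before-respond, write-back-after loop is the part worth internalizing. Here is a minimal sketch of that loop. It assumes a toy keyword-overlap memory and accepts any callable in place of the LLM. `KeywordMemory` and `answer_with_memory` are hypothetical names for illustration, not the MemOS API.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class KeywordMemory:
    """Hypothetical toy memory store; a stand-in for real retrieval, not the MemOS API."""
    items: List[str] = field(default_factory=list)

    def search(self, query: str, k: int = 3) -> List[str]:
        # Score each memory by how many words it shares with the query.
        q = set(query.lower().split())
        scored = [(len(q & set(m.lower().split())), m) for m in self.items]
        # Keep only memories with some overlap, best first (ties keep insertion order).
        matches = sorted((p for p in scored if p[0] > 0), key=lambda p: p[0], reverse=True)
        return [m for _, m in matches[:k]]

    def add(self, text: str) -> None:
        self.items.append(text)


def answer_with_memory(memory: KeywordMemory, user_msg: str, llm: Callable[[str], str]) -> str:
    # 1. Retrieve relevant memories *before* generating the response.
    recalled = memory.search(user_msg)
    context = "\n".join(f"- {m}" for m in recalled)
    reply = llm(f"Relevant memories:\n{context}\n\nUser: {user_msg}")
    # 2. Write the interaction back *after* responding so later turns can recall it.
    memory.add(f"User said: {user_msg}")
    return reply
```

With an echo function as the "LLM", `answer_with_memory(mem, "which local models do I use", lambda p: p)` returns a prompt containing only the memories that share words with the question. It also appends the question to the store. Swapping the keyword scorer for embeddings and the list for a persistent store is where the real engineering effort goes.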

But there’s a big difference between:

- liking an architecture, and

- trusting a deployment model for sensitive personal data.

I wanted the architecture and local control.

Attempt 1: lightweight reinvention (about $50)

My first pass was not random coding. I asked Miko to use the Claude Code CLI (for harder engineering problems) to read the MemOS paper and research context, inspect the relevant codebase patterns, and scaffold the prototype in my project path before we locked scope.

Only after that review did we settle on a few lightweight modules to replace first (mainly retrieval-pipeline pieces like the parser and reranker, plus lifecycle hooks), instead of trying to mirror every subsystem at once.

That approach helped us move quickly, but the openclaw-memos-lite benchmark runs still showed a clear quality gap versus hosted MemOS. The initial bench:local run landed at Recall@10 = 0.0% (0/10). Even after fixing the search thresholds, it only reached 20.0% (2/10), with a very low coverage F1 of 0.006. That was miles behind the hosted endpoint (https://memos.memtensor.cn/api/openmem/v1).

I also kept the benchmark comparisons explicit across modes:

- bench:remote (hosted MemOS API): Recall@10 = 70% to 100% on the tiny fixture runs

- bench:openclaw (native OpenClaw memory path): Recall@10 = 0% to 20% in my runs (best case after threshold tuning)
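The benchmark harness's exact scoring isn't reproduced here. This sketch assumes Recall@10 is a per-query hit rate: a query counts as a hit if any expected memory lands in the top 10, which is consistent with the 0/10 and 2/10 counts. It also shows how an over-strict similarity threshold can zero out recall before ranking quality even matters. `Hit`, `recall_at_k`, and `min_score` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass
class Hit:
    memory_id: str
    score: float  # similarity score reported by the retriever


def recall_at_k(results: Dict[str, List[Hit]], expected: Dict[str, Set[str]],
                k: int = 10, min_score: float = 0.0) -> float:
    """Assumed definition: share of queries whose top-k hits, after dropping
    scores below min_score, contain at least one expected memory id."""
    if not expected:
        return 0.0
    hits = 0
    for query, gold in expected.items():
        # Threshold first, then rank: a too-high min_score empties the list.
        kept = [h for h in results.get(query, []) if h.score >= min_score]
        top = sorted(kept, key=lambda h: h.score, reverse=True)[:k]
        if any(h.memory_id in gold for h in top):
            hits += 1
    return hits / len(expected)
```

With made-up scores in the 0.3 to 0.45 range, `min_score=0.7` returns 0.0 even when the right memory is ranked first. Lowering the threshold recovers the hits without changing the retriever at all, which is the kind of failure threshold tuning addresses.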

That measured gap, not subjective feel, is why I say quality was still behind hosted.

That was the first serious checkpoint:

AI can help you rebuild structure quickly.

Matching mature system behavior is the expensive part.

So yes, the code came fast. No, parity didn’t come fast.

Attempt 2: local stack experiments (another $10+)

I kept pushing local and tested additional paths, including running EverMemOS locally plus Docker + vLLM-metal on macOS.

For memory models, I standardized on:

- qwen3:8b for parser/chat-side memory logic

- qwen3-embedding:4b for embeddings/retrieval

Both were hosted locally.

The key issue in this phase was integration behavior. In the EverMemOS path I tested, the memory-side OpenAI-compatible call path kept running Qwen with thinking enabled and didn't reliably expose a clean way to disable it. As a result, memory-save requests frequently hit long processing windows or timeouts before finishing. That was the practical bottleneck, not whether the model existed locally.
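One way to keep memory-save calls from stalling on reasoning output assumes a vLLM-style OpenAI-compatible server. Qwen3's chat template accepts `enable_thinking`, and vLLM forwards it through a top-level `chat_template_kwargs` request field. Servers that ignore that field will still think, so the sketch also strips any `<think>...</think>` block before parsing. The helper names are hypothetical, and this is not how EverMemOS wires its calls.

```python
import re
from typing import Any, Dict

# Matches a Qwen3-style reasoning block plus any whitespace after it.
THINK_BLOCK = re.compile(r"<think>.*?</think>\s*", re.DOTALL)


def build_no_think_request(model: str, prompt: str, max_tokens: int = 512) -> Dict[str, Any]:
    """Hypothetical helper: OpenAI-compatible /v1/chat/completions body with
    Qwen3 thinking disabled. chat_template_kwargs is a vLLM extension;
    other servers may silently ignore it."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,  # caps generation length for memory-save calls
        "chat_template_kwargs": {"enable_thinking": False},
    }


def strip_thinking(text: str) -> str:
    """Remove any <think>...</think> blocks so only the final answer is parsed."""
    return THINK_BLOCK.sub("", text).strip()
```

The stripping step is deliberately defensive. Even with thinking disabled, Qwen3 can emit an empty `<think></think>` pair, which would otherwise break a strict JSON parser on the memory-save path.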

Reset: fresh MemOS checkout

Eventually I moved away from my earlier lite-reinvention path and restarted from a fresh upstream MemOS checkout from GitHub.

That reduced drift and made debugging much cleaner. There were still hiccups around environment variables and Docker Compose wiring, but the reset gave me a stable base to iterate from.

From there, the work became less about “can I generate code” and more about “can I keep this stack deterministic and reliable locally?”
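Most of the reset-phase hiccups were environment variables and Compose wiring, so a fail-fast preflight check helps keep startup deterministic. This is a minimal sketch. The variable names below are hypothetical placeholders, not the settings MemOS actually reads; check the MemOS repository for the real ones.

```python
import os
from typing import List, Mapping, Optional, Sequence

# Hypothetical variable names for illustration; MemOS's real settings may differ.
REQUIRED_VARS = ("CHAT_MODEL_BASE_URL", "CHAT_MODEL_NAME", "EMBEDDING_MODEL_NAME")


def missing_env(required: Sequence[str] = REQUIRED_VARS,
                env: Optional[Mapping[str, str]] = None) -> List[str]:
    """Return required variables that are unset or blank."""
    env = os.environ if env is None else env
    return [name for name in required if not env.get(name, "").strip()]


def preflight(required: Sequence[str] = REQUIRED_VARS,
              env: Optional[Mapping[str, str]] = None) -> None:
    """Fail fast at startup instead of midway through a memory write."""
    missing = missing_env(required, env)
    if missing:
        raise RuntimeError("missing or empty environment variables: " + ", ".join(missing))
```

Running a check like this as the first step of a container's entrypoint turns a silently missing `env_file` entry into an immediate, readable error.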

What actually mattered technically

Before finalizing this write-up, I also asked Claude Code to do a technical review of the codebase (plus paper alignment where accessible). The MemOS team also provides an out-of-the-box OpenClaw plugin.

The practical conclusions were consistent with my experience:

- MemOS today is effectively a dual-index architecture (vector + graph), not just one retrieval primitive.

- Local self-hosting is absolutely possible, but it is sensitive to integration details and operational defaults.

- Some memory capabilities are fully usable now; others are still partial or placeholder-level depending on module.
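To make the dual-index point concrete, consider that the two indexes answer different questions. A vector index finds memories that are semantically similar to the query, while a graph index follows explicit links between memories. The toy sketch below seeds results with vector search and then expands along graph edges. It uses a hypothetical `DualIndex` class, plain cosine similarity, and hop-limited expansion; it is not MemOS's actual implementation.

```python
import math
from collections import defaultdict
from typing import Dict, Iterable, List, Set


class DualIndex:
    """Hypothetical toy vector + graph index; not MemOS's implementation."""

    def __init__(self) -> None:
        self.vectors: Dict[str, List[float]] = {}
        self.edges: Dict[str, Set[str]] = defaultdict(set)

    def add(self, mem_id: str, vector: List[float], links: Iterable[str] = ()) -> None:
        self.vectors[mem_id] = vector
        for other in links:
            # Undirected edge: related memories point at each other.
            self.edges[mem_id].add(other)
            self.edges[other].add(mem_id)

    @staticmethod
    def _cosine(a: List[float], b: List[float]) -> float:
        dot = sum(x * y for x, y in zip(a, b))
        norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
        return dot / norm if norm else 0.0

    def search(self, query: List[float], k: int = 2, hops: int = 1) -> List[str]:
        # Vector index: top-k memories by cosine similarity become seeds.
        ranked = sorted(self.vectors, key=lambda m: self._cosine(query, self.vectors[m]),
                        reverse=True)
        result = ranked[:k]
        # Graph index: expand along links to pull in related memories
        # that vector similarity alone would rank too low.
        frontier = list(result)
        for _ in range(hops):
            next_frontier = []
            for mem_id in frontier:
                for neighbor in sorted(self.edges.get(mem_id, ())):
                    if neighbor not in result:
                        result.append(neighbor)
                        next_frontier.append(neighbor)
            frontier = next_frontier
        return result
```

For instance, if `pref:local` is linked to `tool:ollama`, a query vector close to `pref:local` returns both with `k=1`. This holds even when an unlinked memory has a higher cosine score than `tool:ollama`. That is the kind of recall a single retrieval primitive misses.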

Here's how the memory structure actually maps out, from the paper and the codebase:

In short: a lot of value is already real, but you need to separate production-ready paths from roadmap/experimental paths.

Runtime choice: why I still landed on Ollama

Even after testing vLLM-metal, I eventually stayed with Ollama as my local inference engine.

In my day-to-day macOS workflow, Ollama’s llama.cpp path felt faster to iterate with and more stable operationally.

So my practical local stack became:

local MemOS + local Qwen models + Ollama runtime

Could this change later? Sure. But this was the best reliability/speed tradeoff for me now.
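For reference, this is roughly what talking to the two Qwen models through Ollama's native REST API looks like. It assumes Ollama is listening on its default `http://localhost:11434` and that both model tags have been pulled. `/api/chat` and `/api/embed` are Ollama endpoints, and the `think` field is only honored by recent Ollama releases. The Python helper names are hypothetical, and MemOS's own Ollama integration may make these calls differently.

```python
import json
import urllib.request
from typing import Any, Dict, List

OLLAMA_URL = "http://localhost:11434"  # Ollama's default local address


def chat_payload(prompt: str, model: str = "qwen3:8b") -> Dict[str, Any]:
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # one JSON response instead of a token stream
        "think": False,   # recent Ollama releases: skip Qwen3's reasoning phase
    }


def embed_payload(texts: List[str], model: str = "qwen3-embedding:4b") -> Dict[str, Any]:
    return {"model": model, "input": texts}


def post_json(path: str, payload: Dict[str, Any], timeout: float = 120.0) -> Dict[str, Any]:
    req = urllib.request.Request(
        OLLAMA_URL + path,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))


def chat(prompt: str) -> str:
    # Non-streaming /api/chat responses carry the reply under message.content.
    return post_json("/api/chat", chat_payload(prompt))["message"]["content"]


def embed(texts: List[str]) -> List[List[float]]:
    # /api/embed returns one vector per input under "embeddings".
    return post_json("/api/embed", embed_payload(texts))["embeddings"]
```

With the server running, `embed(["user prefers local models"])` should return a list holding a single vector. The explicit timeout on every request matters more than it looks: it bounds how long a stuck memory write can block the agent.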

The expensive lesson (and the important one)

Here’s the core takeaway I care about:

AI can genuinely improve coding efficiency and help "replicate" almost any software with enough resources.

But brute-force rewriting existing systems can very quickly cost hundreds or thousands of dollars.

That’s the part people underweight.

For larger codebases, we still need a methodology that lets agents divide and conquer safely:

- split work into strict modules and interfaces,

- define acceptance criteria before generation,

- benchmark each module against baseline continuously,

- integrate early and often,

- re-scope aggressively when cost rises faster than quality.
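The benchmark-against-baseline and re-scope rules can be written down as an explicit gate that runs after every agent iteration. This is a hypothetical sketch. `ModuleRun`, `gate`, and the $5-per-percentage-point budget are illustrative assumptions, not numbers from my runs. The point is that acceptance criteria are fixed before generation, and the gate, rather than momentum, decides between accept, iterate, and re-scope.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ModuleRun:
    name: str
    recall_at_10: float  # quality on the shared benchmark fixture, 0.0 to 1.0
    cost_usd: float      # cumulative agent spend on this module so far


def gate(run: ModuleRun, baseline_recall: float, min_recall: float,
         prev: Optional[ModuleRun] = None, max_usd_per_point: float = 5.0) -> str:
    """Return "accept", "iterate", or "rescope" for one module iteration."""
    # Acceptance criteria fixed before generation: meet both the floor and the baseline.
    if run.recall_at_10 >= max(baseline_recall, min_recall):
        return "accept"
    if prev is not None:
        spent = run.cost_usd - prev.cost_usd
        gained = (run.recall_at_10 - prev.recall_at_10) * 100  # percentage points
        # Cost rising faster than quality: stop generating and re-scope the module.
        if spent > 0 and (gained <= 0 or spent / gained > max_usd_per_point):
            return "rescope"
    return "iterate"
```

The baseline here would be the hosted or upstream result on the same fixture, measured once and pinned, so every module is judged against the same number.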

Without this, you get lots of generated code and not enough trustworthy systems.

What worked, in plain terms

- Local-first memory is viable.

- Model choice matters, but integration discipline matters more.

- Fresh baselines beat patching heavily drifted experiments.

- Agent speed is real; agent cost is also very real.

- Methodology is now the differentiator.

I started this trying to reinvent MemOS locally for OpenClaw.

I ended this phase with something better than a clone attempt: a stack I can run, reason about, and iterate on without outsourcing memory ownership.

That’s progress worth keeping.

And yes — luckily I didn’t make Miko lose her memory in the process.